Multimodal prompt injection: attacks in images, audio, and video

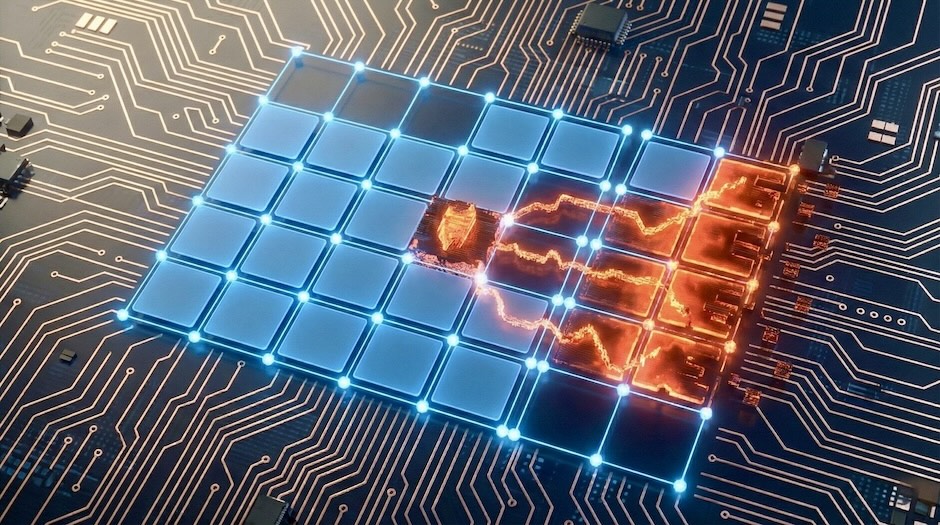

How attackers bypass text-based guardrails by embedding malicious instructions in images and audio, and the layered defenses required to counter them.

How attackers plant instructions today that agentic AI systems execute weeks later, and the defense architecture that stops them.

How the shift from single-model LLM integrations to agentic AI systems amplifies prompt injection into a multi-step attack chain.